The past few years have pushed us into an unusual cultural moment, one in which confidence in what we see and read online has quietly eroded. Nearly half of the public now questions the authenticity of digital content by default, a shift many are calling the Great Trust Recession. Nowhere is this loss of confidence more sharply felt than in insurance, an industry that has always depended on clear evidence, credible narratives, and documented truth. Today that foundation is being undermined by a fast-moving technological arms race in which fraudsters use generative AI to fabricate images, videos, documents, and entire stories with a realism that is increasingly hard to tell apart from the real thing. For providers such as Admiral in Cardiff, the impact is no longer theoretical. They have reported a steep rise in fraud, up 71% in the last year, fuelled by AI-generated photos of vehicle damage, doctored metadata, and claims for luxury goods that never existed in the first place.

What is striking is that the challenge is not limited to sophisticated deepfakes. A parallel wave of “shallowfakes”, simple edits made with consumer-grade tools, is flooding travel and property claims. In periods of geopolitical disruption, these low-effort manipulations become even harder to verify, because they are often built on real events and real locations, with subtle distortions designed to nudge a claim just over the line. Fraud has scaled to industrial levels. Tools that once required expertise are now cheap, automated, and packaged for mass use, allowing individuals to generate tailored deception at volume. With millions of deepfake assets circulating each year, insurers are being pushed into an era where the burden of proof is radically higher than the systems they currently rely on can meet.

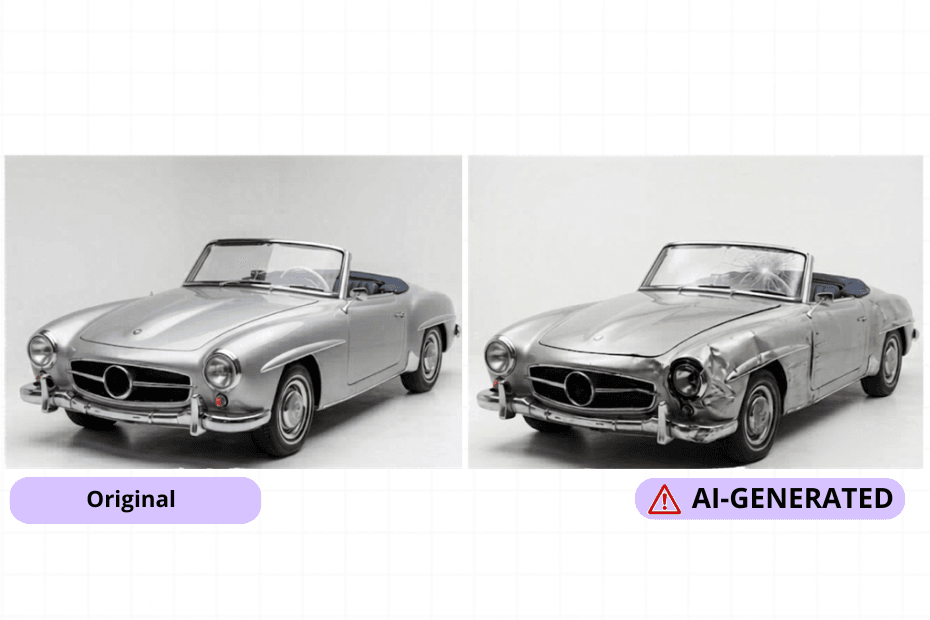

This is the environment in which FraudOps has positioned itself: not as another layer of manual checking, but as a technical counterweight capable of restoring some of the trust that has been lost. Instead of relying on a subjective assessment of an image or document, it applies digital forensics that read far deeper than surface-level realism: pixel-level inconsistencies, improbable lighting behaviour, colour decay patterns, compression artefacts, and biological signals that human observers simply cannot detect. These details matter. More than half of senior leaders now cite AI-enabled deception as their top operational concern, not just because it drives financial loss, but because it threatens a company’s reputation at a time when customer confidence is already fragile. High-accuracy detection is no longer a defensive add-on; it is becoming a core expectation for organisations that want to maintain credibility in an increasingly synthetic information space.

If the insurance sector is to rebuild trust, it must focus on these subtleties—the quiet, technical signatures that distinguish truth from fabrication. By integrating systems that can interrogate evidence at this granular level, insurers can protect genuine customers, filter out the rising tide of synthetic claims, and begin to re-establish the reliability that the digital economy so desperately needs.
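
To make one of these technical signatures concrete, here is a minimal sketch of a noise-consistency check, one simple form of pixel-level analysis. It is an illustration only and not a description of how FraudOps works: the function names, the 4x4 tile size, and the `ratio` threshold are all assumptions chosen for the example. The idea is that a genuine photo tends to carry similar sensor noise across the frame, so a tile that is far smoother or far noisier than its neighbours may have been pasted in or retouched.

```python
from statistics import median, pvariance


def block_variances(pixels, block=4):
    """Return {(tile_row, tile_col): variance} for each non-overlapping tile.

    `pixels` is a greyscale image as a list of rows of brightness values.
    (Hypothetical helper for illustration.)
    """
    rows, cols = len(pixels), len(pixels[0])
    out = {}
    for r in range(0, rows - block + 1, block):
        for c in range(0, cols - block + 1, block):
            tile = [pixels[r + i][c + j]
                    for i in range(block) for j in range(block)]
            out[(r // block, c // block)] = pvariance(tile)
    return out


def suspicious_blocks(pixels, block=4, ratio=4.0):
    """Flag tiles whose variance differs from the median tile by more than `ratio` times.

    The threshold is an assumed value; a real system would calibrate it.
    """
    variances = block_variances(pixels, block)
    typical = median(variances.values())
    flagged = []
    for pos, v in variances.items():
        # Adding 1.0 to both sides avoids dividing by zero on perfectly flat tiles.
        hi = max(v, typical) + 1.0
        lo = min(v, typical) + 1.0
        if hi / lo > ratio:
            flagged.append(pos)
    return sorted(flagged)
```

A check like this is far too crude to use on its own, since legitimately flat regions such as clear sky would also be flagged. It does show why forensic signals can catch edits that look flawless to the eye: the test ignores what the image depicts and looks only at whether its statistics are internally consistent.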

Strengthening the investigative backbone behind every claim will be just as important as advancing the technology that screens claims. AI may have lowered the cost of creating convincing fakes, but it hasn’t removed the value of disciplined validation. Robust fraud-management controls, consistent desktopping, and structured investigation workflows act as the second line of defence, scrutinising the context, timelines, behaviours, and digital footprints that fabricated media cannot fully replicate. As insurers face an environment where image-level realism is no longer a reliable signal of truth, the organisations that combine high-accuracy detection with rigorous investigative process will be the ones that stay ahead. This is where FraudOps becomes essential, not simply as a detection layer but as the engine that strengthens case handling, enforces auditability, and closes the procedural gaps that AI-enabled fraud depends on. By pairing smarter tools with stronger investigative discipline, insurers can build the resilience needed to withstand the next wave of synthetic deception.